Wouter Beneke

Marketing Lead at XMPRO

Executives in heavy industry know this pattern well. A pilot shows promise on one line or one plant, but scaling beyond that site proves elusive. What looked like a breakthrough ends up as another proof-of-concept that never pays back.

The numbers back it up. Only 1% of companies call their GenAI rollouts mature, and more than 80% say they aren’t seeing EBIT impact at the enterprise level (McKinsey, Mar 2025). Gartner now projects that more than 40% of agentic AI projects will be canceled by 2027, and that at least 30% of GenAI initiatives could be abandoned after proof of concept by 2025.

For mining, manufacturing, energy, and other mission-critical sectors, the risks are even sharper. Safety rules, regulatory demands, and brownfield realities mean shortcuts that work in consumer AI will not survive on the plant floor.

Success is not about running more models. It comes down to breaking through the ten walls that block the path from pilot success to repeatable enterprise value. This guide lays out what those walls are... and how to overcome them with governance, integration, and disciplined execution.

🔍 What You’ll Discover in This Article

This field guide shows industrial executives how to move beyond pilots and achieve enterprise-scale AI with measurable, repeatable value. Inside, you’ll find:

- A Readiness Checklist – 10 yes/no questions to test if your organization is prepared for AI at scale.

- The Top 10 Challenges for deploying Industrial AI at Scale – from pilot purgatory and vendor lock-in to regulatory hurdles and workforce gaps.

- Practical Playbooks – proven solutions with executive actions and KPIs for each challenge.

- A 30-60-90 Day Roadmap – concrete steps to establish governance, deliver value at a lighthouse site, and replicate success.

- The Competitive Reality – why organizations that scale now will lock in advantages rivals cannot reclaim.

But first... Are you ready to even start?

Industrial AI Executive Readiness Check: 10 Yes/No Questions

Before diving into the challenges, assess your organization's readiness with these critical questions:

How to score: Count Yes only if you can point to evidence (doc ID/link or a test date within the last 90 days).

Governance & Value

- Value & scope locked + owner: One high-value use case documented with CFO-verified baseline, success metrics, exit criteria, and a named business owner accountable for results.

- Results wired + finance cadence: Dashboards/event boards stand up before the pilot; counterfactual/control defined; monthly Finance review scheduled (keep/kill rules).

Safety & Control

- Boundaries & HITL: Decision vs execution points and human approvals are documented; no writes to safety-critical systems unless formally approved.

- Rollback ready: Failure modes mapped; tabletop or staged rollback test completed ≤90 days with a target RTO and evidence log.

- Minimal secure access + MOC: Least-privilege accounts; sandbox/IDMZ path; Management of Change window, site permits/inductions, and safety approvals are confirmed.

Data & Integration

- Data path approved: Security-approved method to required signals (export/API/gateway) exists; latency/refresh meet the use case.

- Instrumentation & time base: Needed sensors/tags in place; PTP/NTP and sample rates adequate; historian/event-store API accessible.

- Context & basic quality: Tag dictionary + units complete for pilot assets; basic monitors on critical feeds (completeness, timeliness, validity) with alerts are running.

- Ground-truth available: Labels/outcomes (e.g., failure codes, defect grades, assays, downtime reasons) exist, are trusted, and are mapped to the metrics in #1; a labeling/cleanup plan exists if gaps remain.

Operations & Scale

- SME time & runbook: Named SMEs have protected time; pilot runbook + escalation path (on-call during the pilot window) is agreed and distributed.

Score interpretation: 0–4 = Build foundations first | 5–7 = Pilot with caution | 8–10 = Ready to scale

The Top 10 Challenges Stopping Industrial AI at Scale

Challenge 1: The Boil-the-Ocean Trap - Scope Kills More Projects Than Technology

Why It Matters: Executives get excited about AI's potential and try to solve every operational problem simultaneously. This spreads resources too thin, creates impossible complexity, and guarantees failure. The most successful AI programs start with 1-3 targeted, measurable wins.

Common Failure Pattern: Launching 10-15 use cases across multiple plants simultaneously. No clear success criteria for individual projects. Treating AI as a magic solution for decades of operational challenges.

How to Solve It:

- Start with 1-3 targeted use cases that deliver measurable ROI within 3-6 months

- Define specific, quantifiable success metrics for each use case before starting

- Resist the urge to expand scope until you've proven replication capability

Executive Action: Fight the temptation to solve everything at once. Pick winnable battles with clear business value and use those victories to fund broader transformation.

KPIs to Track:

$ Impact (Finance-verified)

- Quarterly cash/EBIT uplift vs target.

- Green ≥100% of target · Amber 70–99% · Red <70%.

Primary value lever delta (pick one per use case)

- Examples: Runtime ↑, Downtime ↓, Throughput ↑, Yield ↑, Quality ↑, Energy ↓.

- Show % improvement vs baseline and vs target.

- Same green/amber/red thresholds as above.

Speed to value (deployment + first value)

- Deployed: go-live in 30–60 days (Green ≤45 · Amber 46–60 · Red >60).

- First value: first Finance-verified impact ≤90 days (Green ≤60 · Amber 61–90 · Red >90).
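The green/amber/red thresholds above are simple enough to automate in a dashboard. The following is a minimal sketch of that arithmetic, assuming a Finance-verified actual and target are already available; the function names and the sample figures are illustrative, not part of any specific product.

```python
def value_status(actual: float, target: float) -> str:
    """Green >= 100% of target, Amber 70-99%, Red < 70%."""
    if target <= 0:
        raise ValueError("target must be positive")
    pct = 100.0 * actual / target
    if pct >= 100.0:
        return "Green"
    if pct >= 70.0:
        return "Amber"
    return "Red"


def days_status(days: int, green_max: int, amber_max: int) -> str:
    """Lower is better: Green <= green_max, Amber <= amber_max, else Red."""
    if days <= green_max:
        return "Green"
    if days <= amber_max:
        return "Amber"
    return "Red"


def scorecard(ebit_actual, ebit_target, go_live_days, first_value_days):
    """Combine the three KPI groups into one status view."""
    return {
        "finance_verified_impact": value_status(ebit_actual, ebit_target),
        "deployed": days_status(go_live_days, 45, 60),         # Green <=45, Amber 46-60
        "first_value": days_status(first_value_days, 60, 90),  # Green <=60, Amber 61-90
    }


# Illustrative numbers only: 85% of target, go-live on day 52, first value on day 58.
print(scorecard(850_000, 1_000_000, 52, 58))
```

With these sample inputs the impact and deployment KPIs come out Amber and first value comes out Green, which is the kind of mixed picture a monthly Finance review would need to discuss.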

Challenge 2: The Replication Gap - Pilots That Won’t Scale

Why It Matters: Most pilots never escape pilot purgatory—they work once, then stall before the second site. Enterprise value only shows up when the solution is packaged, parameterized, and repeatable so the next plant can deploy by configuration, not re-engineering, and deliver Finance-verified impact on a predictable clock.

Common Failure Pattern: Teams celebrate a promising PoC, but ship only ad-hoc code and tribal know-how. Each plant then rebuilds integrations, remaps tags and units, and tweaks logic in isolation. Success is declared on accuracy charts while Finance still can’t see repeatable EBIT at the second site. Months pass, costs rise, and momentum evaporates.

How to Solve It (build for scale from day one)

- Design for composability. Package each use case as a reusable, parameterized module/digital twin—core logic stable; site variance in config, not code.

- Abstract the stack. Use a deployment-agnostic integration layer with open protocols & data/model contracts so new sites are configure → deploy, not rebuilds.

- Prove then replicate. Validate at a lighthouse site, then ship a replication kit (containers, IaC, CI/CD, observability, rollback) and enforce a golden path (data-ready → shadow → supervised → production).
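To make "site variance in config, not code" concrete, here is a small sketch. It assumes a single overheating rule shared by two plants that use different tag names and temperature units; the tag names, the limit, and the `SiteConfig` structure are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SiteConfig:
    """Everything that varies by site lives here, not in the logic below."""
    site: str
    temp_tag: str        # site-specific tag name (hypothetical)
    temp_unit: str       # "C" or "F"
    temp_limit_c: float  # alert threshold, always expressed in Celsius


def to_celsius(value: float, unit: str) -> float:
    if unit == "C":
        return value
    if unit == "F":
        return (value - 32.0) * 5.0 / 9.0
    raise ValueError(f"unsupported unit: {unit}")


def overheating(readings: dict, cfg: SiteConfig) -> bool:
    """Core logic: identical at every site; only cfg changes."""
    temp_c = to_celsius(readings[cfg.temp_tag], cfg.temp_unit)
    return temp_c > cfg.temp_limit_c


# Two sites, one module. Deploying site B is a new config entry, not new code.
site_a = SiteConfig("plant-a", "PA.CRUSHER1.BRG_TEMP", "C", 85.0)
site_b = SiteConfig("plant-b", "B2_CR01_TT101", "F", 85.0)

print(overheating({"PA.CRUSHER1.BRG_TEMP": 90.0}, site_a))  # 90 C is above the limit
print(overheating({"B2_CR01_TT101": 180.0}, site_b))        # 180 F is about 82.2 C, below it
```

The design choice is that unit conversion and tag mapping are resolved from configuration, so the rule itself can be tested once and reused.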

Executive Action: Shift funding from pilots to capability development. Approve only use cases with a second-site plan + replication kit, and tie scale budget to Finance-verified value at site #2 within 90 days.

KPIs to Track

- Replication lead time: ≤ 90 days (site #1 value-confirmed → site #2 value-confirmed).

- Value replication: site #2 EBIT ≥ 70% of site #1 after 8–12 weeks.

- Cost per replicated site: declining each deployment.

Challenge 3: The AI Trojan Horse Problem - Self-Introduced Autonomy, Exploding Attack Surface

Why it matters: Industrial AI isn’t one thing. You’ll run computer vision on lines, anomaly detection on historians, optimization in planning... and, increasingly, agentic workloads that can take actions autonomously. Even when there’s no autonomy, AI introduces new data paths, model supply chains, inference endpoints, and OT↔IT bridges. That expands the attack surface the moment you leave the lab. Agentic AI is a risk multiplier because tool access plus autonomy can turn a small lapse into a high-impact action—but the underlying risks exist across all AI types.

Common failure pattern: Pilots ship with hard-coded credentials and shared service accounts, minimal permissioning for AI components, and flat or weakly segmented networks. “Temporary” OT access exceptions persist, inference endpoints run outside change control, and there’s no formal threat model, authority/approval matrix, or end-to-end audit trail. When autonomy appears, teams don’t separate reasoning from execution or require human sign-off for high-impact actions—so a single prompt injection, poisoned feed, or leaked token can cascade into plant-wide disruption.

How to Solve It

- Adopt a Decision-Intelligence Layer (DIL) on top of control systems. Observe/analyze/plan in the DIL; execute only via approved gateways into SCADA/DCS—never direct PLC writes from AI. Keep deterministic control loops intact.

- Separate reasoning from execution. “Plan” processes have zero production creds; only whitelisted “act” services can execute with short-lived, least-privilege tokens enforced by a policy engine.

- Enforce OT/IT segmentation. Use IDMZ, allow-listed connectors (e.g., OPC UA/MQTT with mTLS), optional data diodes for one-way flows, and protocol-aware inspection at the boundary.

- Sandbox every tool call. Containerize with egress controls, syscall/network allowlists, and rate limits; block unknown binaries. Log inputs/outputs with immutable audit trails.

- Kill-switch and rollback. Human approval for high-impact actions, bounded autonomy rules, tested rollback/run-safe modes, and an emergency stop independent of AI.

- Secrets & identity hardening. No hard-coded credentials; per-tool service accounts, JIT/JEA access, HSM/secret vault, and zero standing privilege.

- Input integrity & model hygiene. Prompt sanitization and content isolation; signed data contracts/lineage, poisoning detection, and drift/health monitors before/after deployment.

- Prove it in a twin first. Red-team agent scenarios and validate in a digital twin/sandbox aligned to NIST SP 800-82 & ISA/IEC 62443 before touching production.

- Make it someone’s job. Name an AI Security Owner with veto over agent capabilities and changes to execution rights.

Executive action: Name a single AI Security Owner with veto power on go-live; require a threat model, authority matrix, and gated execution path before any production cutover (IT and OT sign-off).
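The separation of reasoning from execution described above can be pictured as a small gate that every proposed action must pass. This is a minimal sketch under stated assumptions: the tool names, the `Token` structure, and the in-memory audit list are hypothetical stand-ins for a real policy engine, secret vault, and immutable log.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    tool: str          # e.g. "adjust_setpoint" (hypothetical tool name)
    target: str        # asset or tag the action applies to
    value: float
    high_impact: bool  # classified by the authority matrix, not by the agent


@dataclass(frozen=True)
class Token:
    tool: str
    expires_at: float  # epoch seconds; short-lived by design


ALLOWED_TOOLS = {"adjust_setpoint", "create_work_order"}
AUDIT_LOG = []  # stand-in for an immutable audit store


def authorize(action, token, approved_by=None, now=None):
    """Return (allowed, reason). The planning process never calls tools directly."""
    now = time.time() if now is None else now
    if action.tool not in ALLOWED_TOOLS:
        decision = (False, "tool not on allow-list")
    elif token.tool != action.tool or token.expires_at <= now:
        decision = (False, "token invalid or expired")
    elif action.high_impact and not approved_by:
        decision = (False, "human approval required")
    else:
        decision = (True, "ok")
    AUDIT_LOG.append((now, action, approved_by, decision))  # log every attempt
    return decision


act = ProposedAction("adjust_setpoint", "PUMP-7.SPEED", 0.92, high_impact=True)
tok = Token("adjust_setpoint", expires_at=time.time() + 60)
print(authorize(act, tok))                                  # blocked: needs sign-off
print(authorize(act, tok, approved_by="shift-supervisor"))  # allowed
```

Blocked attempts are logged as well as allowed ones, which is what makes a "blocked unsafe actions" KPI measurable.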

KPIs to track:

- Gated execution adoption: % actions routed via approved, sandboxed tools (target ≥95%).

- Blocked unsafe actions: Count per 1,000 actions (expect a rise early, then stabilize).

- Detection/response: MTTD/MTTR for AI-related incidents (trend ↓).

- Coverage: % models/agents inventoried with owner, risk class, signed artifacts, and rollback plan (target ≥95%).

Challenge 4: Regulatory Reality Check - EU AI Act, SOCI, and Compliance Costs

Why It Matters: Regulatory requirements aren't theoretical anymore. Under the EU AI Act, general-purpose AI obligations begin in August 2025, and broader high-risk system requirements phase in through 2026-2027. Australia's SOCI (CIRMP) rules impose material costs: A$1.601B one-off and A$1.076B annual across critical-infrastructure operators.

Common Failure Pattern: AI governance treated as future problem rather than current requirement. No systematic approach to risk classification. Compliance efforts concentrated in legal departments.

How to Solve It:

- Map existing and planned AI use cases to EU AI Act risk categories and compliance timelines

- Implement an AI Management System aligned to ISO/IEC 42001 with NIST AI RMF 1.0 governance

- Budget 5-10% of AI program costs for governance infrastructure tied to regulatory dates

Executive Action: Budget compliance infrastructure now. Assign compliance ownership to business units deploying AI, not just legal teams.

KPIs to Track:

- Percentage of AI models with complete risk classification and documentation

- Coverage of AI inventory (all models identified and catalogued)

- Cost of compliance as percentage of total AI investment

Challenge 5: The Data Readiness Gap - When Reality Hits Your AI Dreams

Why It Matters: Organizations discover too late that their data isn't AI-ready. Beyond integration challenges, they face incomplete historical records, inconsistent data quality, inadequate infrastructure, and unclear governance. As Forrester's Michele Goetz emphasizes, data foundations must be solid before AI can scale effectively.

Common Failure Pattern: Assuming existing data is "good enough" for AI. Discovering critical gaps during model training. Missing infrastructure to handle AI-scale processing.

How to Solve It:

- Conduct data readiness assessment before any AI project: quality, completeness, infrastructure capacity

- Establish data contracts defining ownership, access rights, and quality standards across OT/IT boundaries

- Standardize data collection protocols (MQTT/Sparkplug) and semantic models (OPC UA) for future AI needs
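A data readiness assessment usually starts with a few basic checks per feed. The sketch below scores one feed on completeness, validity, and timeliness; the `FeedSpec` fields, the sample data, and the valid range are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Sample = Tuple[float, Optional[float]]  # (epoch seconds, value or None if missing)


@dataclass(frozen=True)
class FeedSpec:
    expected_interval_s: float  # nominal sample period
    max_age_s: float            # how stale the newest sample may be
    valid_min: float
    valid_max: float


def feed_quality(samples: Sequence[Sample], spec: FeedSpec,
                 window_start: float, now: float) -> dict:
    """Score one feed over the window [window_start, now]."""
    expected = max(1, int((now - window_start) / spec.expected_interval_s))
    in_window = [(t, v) for t, v in samples if window_start <= t <= now]
    present = [(t, v) for t, v in in_window if v is not None]
    valid = [v for _, v in present if spec.valid_min <= v <= spec.valid_max]
    newest = max((t for t, _ in present), default=None)
    return {
        "completeness": min(1.0, len(present) / expected),
        "validity": len(valid) / len(present) if present else 0.0,
        "timely": newest is not None and (now - newest) <= spec.max_age_s,
    }


# Ten one-minute samples: one missing value and one out-of-range spike.
samples = []
for i in range(1, 11):
    value = 50.0
    if i == 3:
        value = None
    if i == 7:
        value = 999.0
    samples.append((60.0 * i, value))

spec = FeedSpec(expected_interval_s=60.0, max_age_s=120.0, valid_min=0.0, valid_max=150.0)
print(feed_quality(samples, spec, window_start=0.0, now=600.0))
```

Scores like these can feed the data quality KPIs listed for this challenge, and the thresholds themselves belong in the data contract so both OT and IT agree on them.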

Executive Action: Treat data and integration as foundational. Expect 40-60% of initial effort to go to data preparation, standardization, and infrastructure enablement; budget accordingly.

KPIs to Track:

- Data quality scores: completeness, accuracy, consistency across critical sources

- Time to prepare data for new AI use case (target: <30 days)

- Percentage of critical operational data meeting AI-readiness standards

Challenge 6: Vendor Lock-in vs. Composable Architecture

Why It Matters: Closed, proprietary platforms trap critical business logic inside one vendor’s ecosystem. That inflates costs, slows down multi-site rollout, and limits flexibility when technology or business needs evolve. In heavy industry, where every site has different systems and integration points, lock-in quickly turns early wins into long-term constraints.

Common Failure Pattern: Executives choose a monolithic platform based on a compelling demo. The first deployment works, but business logic is tied to proprietary tools that can’t be lifted out. Every additional use case requires the same vendor’s stack, and switching costs climb with each step. Scaling stalls because flexibility was traded for speed.

How to Solve It

- Design around open standards such as OPC UA and MQTT Sparkplug to ensure portability.

- Put a neutral decision layer between data, AI models, and control systems. This layer should orchestrate capabilities across multiple vendors rather than binding logic to one tool.

- Require exportable schemas and APIs in contracts so business logic and integrations can move freely if needed.

Executive Action: Look for platforms that act as an integration and decision backbone rather than a single-vendor ecosystem. The right choice will let you plug in different tools, swap vendors when required, and scale across sites without rewriting logic each time. Evaluate partners on interoperability and governance instead of just functionality in a demo.

KPIs to Track

- Percentage of integrations running on open protocols

- Time required to add or replace vendor components

- Vendor concentration risk across critical AI capabilities

Challenge 7: The Knowledge Exodus - Experience Is Disappearing Faster Than Pilots Can Scale

Why it matters: By 2030, U.S. manufacturing could leave 2.1M roles unfilled, risking ~$1T in lost output (Deloitte + The Manufacturing Institute). For industrial AI, that’s not a distant HR issue... it’s an immediate scale blocker. The veterans who know limits, edge cases, and “how we actually run” are the same people you need to define constraints, sign off safety, tune parameters, and prove value to Finance. When they retire or get spread thin, pilots stall, approvals slip, and site-2 replication slows to a crawl.

Common failure pattern: Pilots lean on a few hero SMEs with a “we’ll document later” promise. Each site re-derives tags/units and safe ranges. Training is a vendor demo, not real apprenticeship. No point-of-work job aids. You get one local win—then Finance still can’t see repeatable EBIT at the second site.

How to solve it (build for scale, not heroics):

- Protect SME time. Name the experts, put them on the plan with SLAs; approvals and artifacts are deliverables.

- Capture the why, not just the what. Constraints, limits, troubleshooting heuristics, tag/units dictionaries—plus rationale. Version-controlled.

- Ship a replication kit with every pilot. Parameter map, safe-ops bounds, value method, rollback runbook, operating playbook.

- AI-assisted apprenticeship. Use sims/digital twins and guided scenarios to compress time-to-competency; feed a searchable knowledge base.

- Point-of-work job aids. Context-aware checklists and limits in HMI/mobile so new teams operate safely on day one.

Executive action: Ring-fence 10–15% of the AI program for knowledge capture & workforce enablement. Appoint a Knowledge Owner per use case. Make “replication kit complete” and “job aids live” part of the go/no-go.

KPIs to track:

- Time to independent competency for target roles (goal: ↓ ~40%).

- Coverage of AI-enhanced SOPs across critical procedures (goal: ≥80%).

- Site-2 stand-up time for the same use case (goal: <90 days).

- SME participation delivered vs. plan (goal: ≥95%).

- Constraint/parameter coverage documented for pilot assets (goal: ≥95%).

Challenge 8: Edge Reality vs. Cloud Promises - Latency, Reliability, and Edge Compute Where It Matters

Why It Matters: Industrial control systems depend on millisecond-level timing and deterministic responses. Cloud-based AI introduces latency, network dependency, and unpredictable costs that can compromise safety, stability, and trust in operations. Decision Intelligence is not designed to replace SCADA or DCS reflexes, but to operate above them... making second-to-minute decisions similar to SMEs.

To succeed, this intelligence must run where timing guarantees can be met, with edge compute supporting critical loops and the cloud reserved for learning and optimization.

Common Failure Pattern: Executives adopt cloud-first AI architectures that assume reliable connectivity and tolerable latency. In practice, timing budgets are missed, responses become unreliable, and network gaps cause downtime. Without graceful degradation, operators lose confidence, and projects stall before they can scale.

How to Solve It:

- Implement a Decision Intelligence Layer above existing SCADA/DCS systems rather than replacing control infrastructure.

- Deploy edge inference for time-critical decisions, so that responses needing a deterministic <100 ms turnaround stay local.

- Use cloud synchronization for model retraining, batch optimization, and fleet-level learning.
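Graceful degradation can be illustrated with a latency budget and a local fallback rule. The sketch below shows the pattern only: Python threads do not provide hard real-time guarantees, and the 0.1 s budget, the 80.0 threshold, and the function names are assumptions, not vendor settings.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout

_POOL = ThreadPoolExecutor(max_workers=2)


def local_rule(reading: float) -> str:
    """Deterministic fallback: a fixed threshold an engineer has signed off."""
    return "reduce_load" if reading > 80.0 else "hold"


def decide(reading: float, model, budget_s: float = 0.1):
    """Return (decision, source). Use the model only if it answers within budget."""
    future = _POOL.submit(model, reading)
    try:
        return future.result(timeout=budget_s), "model"
    except FutureTimeout:
        future.cancel()  # best effort; a call that is already running keeps going
        return local_rule(reading), "fallback"
    except Exception:
        # Any model failure degrades to the local rule instead of stopping decisions.
        return local_rule(reading), "fallback"


def slow_remote_model(reading: float) -> str:
    time.sleep(0.3)  # simulates a network round trip that misses the budget
    return "hold"


print(decide(90.0, slow_remote_model))  # falls back to the local rule
```

Tracking how often the `"fallback"` source is used gives a direct measure of whether timing budgets are being met in practice.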

Executive Action: Preserve existing control investments while layering intelligence above them. Fund edge compute for critical timing requirements. Require vendors to document latency budgets, availability, and fallback strategies for every AI application.

KPIs to Track:

- System response time compliance within operational requirements

- Percentage of critical loops with deterministic local fallback

- Edge inference accuracy compared to cloud-based models

- Edge uptime and failover success rates

- Model drift detection and rollback time

Challenge 9: Model Drift and Data Integrity – The Hidden Failures That Kill Scale

Why It Matters: Industrial AI models degrade as processes, equipment, and conditions evolve. A model that works at deployment may drift over time, producing incorrect outputs or unsafe recommendations. In mission-critical operations, even small inaccuracies can compromise safety, reduce performance, and erode trust on the plant floor. Without disciplined MLOps practices, AI projects stall after pilots, leaving enterprises with brittle models that never scale.

Common Failure Pattern: Models are deployed once and left unmanaged. Data quality issues creep in, drift goes undetected, and there is no systematic way to update or roll back models. Parameters are hard-coded, making adaptation slow and costly. Operators lose confidence when models generate errors or become irrelevant, and executives conclude that AI “doesn’t work here.”

How to Solve It:

- Implement continuous monitoring for model performance, data quality, and concept drift.

- Deploy MLOps pipelines for automated retraining, with human validation before production rollout.

- Establish versioning, rollback procedures, and documented audit trails.

- Introduce parameterization so SMEs can safely adjust thresholds and ranges without full retrains.

- Combine retraining and parameter tuning within governed MLOps workflows, ensuring both adaptability and compliance.
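Continuous drift monitoring can start with something as simple as comparing recent input data against the data the model was trained on. The sketch below uses the Population Stability Index (PSI), one common technique among several and not one the article prescribes; the 0.1 and 0.25 cut-offs are a widely quoted rule of thumb and should be treated as assumptions to tune per use case.

```python
import math
from typing import Sequence


def psi(baseline: Sequence[float], recent: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of one feature."""
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has no spread")
    width = (hi - lo) / bins

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    b, r = shares(baseline), shares(recent)
    return sum((ri - bi) * math.log(ri / bi) for bi, ri in zip(b, r))


def drift_status(value: float, warn: float = 0.1, act: float = 0.25) -> str:
    if value >= act:
        return "review_and_consider_rollback"
    if value >= warn:
        return "watch"
    return "stable"


baseline = [i / 100 for i in range(1000)]  # synthetic training-time distribution
shifted = [x + 3.0 for x in baseline]      # same shape, shifted operating point

print(drift_status(psi(baseline, baseline)))  # identical data: stable
print(drift_status(psi(baseline, shifted)))   # large shift: review
```

A status of "review" should open a governed workflow (human validation, then retrain, re-parameterize, or roll back) rather than trigger an automatic change.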

Executive Action: Treat AI models as living assets, not static deployments. Invest in MLOps capabilities that enforce monitoring, governance, and controlled evolution. Define ownership across IT and operations so there is accountability for keeping models accurate, explainable, and trusted.

KPIs to Track:

- Mean time to detect model performance degradation

- Percentage of models with automated monitoring and alerting

- Ratio of parameter adjustments to full retrains

- Model rollback frequency and success rate

- Number of incidents prevented by drift detection or parameter tuning

Challenge 10: Organizational Inertia - When No One Owns AI, Nothing Scales

Why It Matters: Industrial AI projects often stall because ownership is unclear. IT teams are tasked with leading, but they don’t have the physics-informed judgment, safety awareness, or operational context needed to design autonomy that works on the plant floor. Engineers, meanwhile, are excluded from governance decisions even though they are the ones who understand process physics, safety guardrails, integration constraints, and parametric control across asset lifecycles. Without their leadership, AI logic drifts into impractical designs that break in production. Conversely, when engineers act without IT, solutions remain local prototypes that cannot scale securely across the enterprise. The result is predictable: fragmented authority, slow decisions, and pilots that never progress.

Common Failure Pattern: AI projects assigned to IT alone prioritize infrastructure and compliance but fail in real-world operations. Engineer-led experiments without IT’s governance backbone remain one-off projects. With no shared ownership, coordination collapses, and initiatives get trapped in PoC purgatory.

How to Solve It:

- Establish joint AI steering committees with IT, engineering, operations, and business units.

- Define clear RACI ownership: engineers own autonomous logic, safety guardrails, and operational constraints; IT owns infrastructure, integration, and cybersecurity.

- Align metrics that balance operational performance with enterprise governance, so IT and engineering incentives reinforce each other.

Executive Action: Appoint AI leaders who have credibility in both domains. Build governance models where engineers design the logic of autonomy, while IT secures and scales it.

KPIs to Track:

- Lead time from AI concept approval to production deployment

- Cross-functional collaboration and satisfaction scores

- Success rate of jointly governed AI implementations

- Percentage of projects with clearly defined ownership split between IT and engineering

The Competitive Reality: Why This Matters Now

Competitors are not waiting. While your organization debates ownership, security, or infrastructure choices, others are systematically solving these challenges and compounding operational advantages with every quarter. Once a rival achieves measurable throughput gains or reduces downtime at scale, those gains accumulate relentlessly — and cannot be clawed back.

The organizations that will define the next decade are not those running another round of pilots — they are the ones treating these ten challenges as an integrated transformation agenda. Leaders act now with governance, security, and cross-functional ownership. Laggards hesitate and lose ground permanently.

The choice is in front of you: continue cycling through pilots, or build the operating model that secures your competitive future.

About the Author: Wouter Beneke champions Industrial AI and Autonomous Agentic AI Teams for Industry at XMPro, helping global manufacturers, utilities, and energy companies navigate the shift toward intelligent operations and composable AI architectures.

Appendix: Executive Resource Kit

RACI Matrix for Industrial AI: Clear Authority in Complex Projects

Industrial AI projects fail not because of technology, but because of unclear ownership. When Engineering owns the operational logic, IT owns the infrastructure, Business owns the budget, and CISO owns security—who actually makes the decisions?

The RACI matrix eliminates this confusion by defining exactly four roles for every critical decision:

- Responsible: Does the work

- Accountable: Ensures it gets done (signs off)

- Consulted: Provides input before decisions

- Informed: Stays updated on progress

The Golden Rule: One "R" and one "A" per row. Multiple people can be Consulted or Informed, but only one person/team should be Responsible for execution, and only one should be Accountable for the outcome.
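The rule is easy to check mechanically once a matrix exists. In the sketch below the decisions and role assignments are hypothetical examples, not the matrix referenced in this appendix; a code such as "AR" means one party holds both roles.

```python
def validate_raci(matrix: dict) -> list:
    """Return a list of rows that break the one-R, one-A rule."""
    problems = []
    for decision, roles in matrix.items():
        for letter in ("R", "A"):
            holders = [who for who, code in roles.items() if letter in code]
            if len(holders) != 1:
                problems.append(
                    f"{decision}: expected one {letter}, found {len(holders)} {holders}"
                )
    return problems


# Hypothetical rows for illustration only.
matrix = {
    "Define safe operating limits": {
        "Engineering": "AR", "IT": "C", "Business": "I", "CISO": "C",
    },
    "Approve production cutover": {
        "Engineering": "R", "IT": "R", "Business": "A", "CISO": "C",
    },
}

for problem in validate_raci(matrix):
    print(problem)
```

Here the second row is flagged because two teams are marked Responsible, which is exactly the standoff the matrix is meant to prevent.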

In industrial AI, this prevents the classic standoff where Engineering says "IT should handle this" while IT says "Engineering needs to decide this." Clear authority accelerates deployment and prevents expensive delays.

Use this matrix as your decision-making template for any industrial AI project—from pilot to production scale.

Engineering owns the "what and why" of AI decisions. IT owns the "how and where" of secure, scalable execution.

Executive Action Plan: 30-60-90 Day Roadmap

Days 1–15: Establish Foundations

- Rapid readiness check across the ten challenge areas.

- Build an AI inventory and risk register.

- Select 1–2 lighthouse use cases with measurable EBIT potential.

- Define OT/IT data contracts.

- Appoint a single accountable scale owner.

Days 16–30: Build Governance and Guardrails

- Stand up a lightweight, ISO/IEC 42001-aligned management framework.

- Enforce standard protocols at the edge and integration points.

- Deploy a secure, auditable pipeline for AI model deployment.

- Begin structured SME knowledge capture into decision intelligence flows.

Days 31–60: Deliver and Prove Value

- Deploy the first AI capability into the lighthouse site with live monitoring.

- Run agent security and resilience simulations to validate safeguards.

- Document a replication playbook that defines parameters, integrations, and governance checkpoints.

- Publish an EBIT impact dashboard to demonstrate value to stakeholders.

Days 61–90: Scale and Replicate

- Horizontal Scale: Replicate the proven lighthouse use case across one additional site using the replication playbook.

- Vertical Scale: Extend to a second use case at the same site by reusing agents, data streams, and governance frameworks.

- Validate cross-site or cross-use-case EBIT impact to show compounding benefits.

- Refine governance structures and ownership models to support continuous scale.

Research Sources: McKinsey State of AI 2025 (Mar, global) | PwC Global CEO Survey 2025 (Jan, global) | Jobs and Skills Australia 2024 OSL | Australian Government Official Impact Analysis (SOCI costs) | OWASP Top 10 for LLM Applications 2025 | NIST AI Risk Management Framework 1.0 + SP 800-82 Rev.3 | ISO/IEC 42001 | European Parliament Research Service (EU AI Act) | Eclipse Foundation (Sparkplug/MQTT) | Gartner Industrial IoT Research (Aug 2025) | THE Journal (Gartner GenAI forecast) | Technology Magazine (Gartner value framework)

Key Citations:

- McKinsey "State of AI" (Mar 2025): 1% mature rollouts, >80% no enterprise-level EBIT impact

- Gartner forecasts (via Reuters, Jun 2025): >40% agentic AI projects canceled by 2027

- Gartner forecast (via THE Journal): ≥30% GenAI projects abandoned by 2025

- McKinsey/Cris Cunha on mining productivity + AI necessity (The Nightly coverage)

- Gartner value framing (ROE/ROI/ROF) - Mary Mesaglio

In the last 12 months, China deployed 84% more autonomous mining trucks, and now leads globally in industrial automation, with a robot density of 470 units per 10,000 workers... surpassing Germany’s 429.

While Western companies debate governance frameworks, competitors are embedding operational advantages measured in millions of dollars and thousands of hours of prevented downtime.

In this race to build autonomous industries, engineers hold the competitive advantage - if organizations enable them to lead the charge.

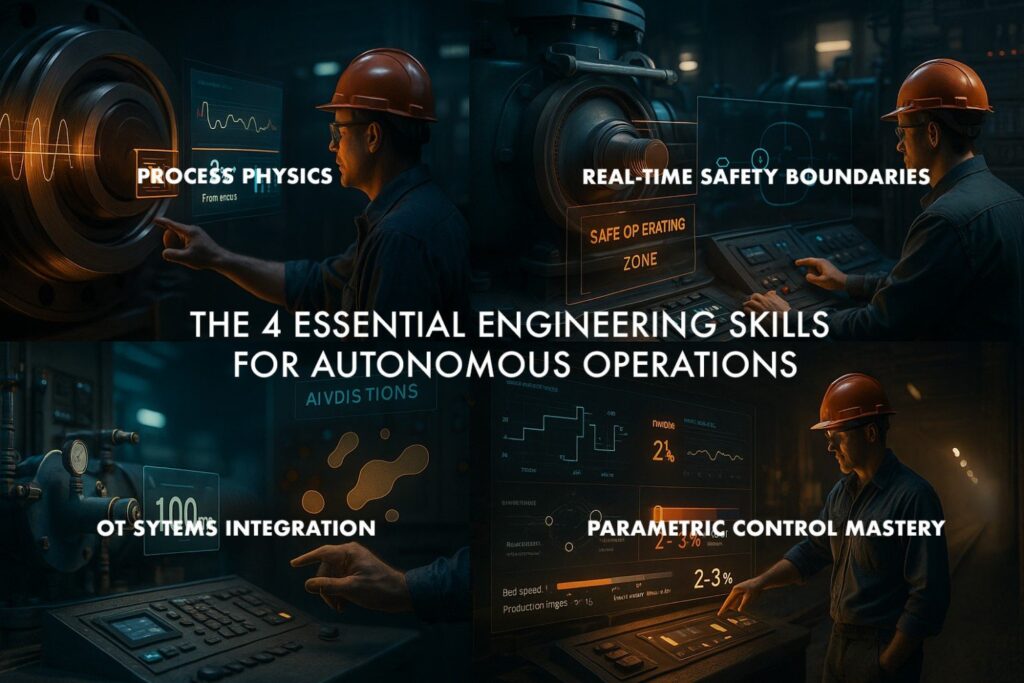

The Four Skills That Make Engineers Essential For Autonomous Operations

1. Process Physics – Turning Data into Physical Truth

An engineer knows that when bearing temperature rises 15°F while vibration increases 2.3 mm/sec, it’s not an “interesting data variation”; it’s the onset of mechanical failure. They understand metallurgical limits, thermal expansion rates, and stress propagation patterns that define what is physically possible versus merely statistically probable.

Why it matters for autonomy: Without physics-informed judgment, autonomous systems risk chasing false positives or missing early warning signs entirely.

Without it: AI may optimize for a pattern in the data while the equipment is quietly heading toward catastrophic failure.

2. Real-Time Safety Boundaries – Knowing Exactly How Far to Push

When pressure drops 2% and flow rate jumps 8%, an engineer instantly recognizes the signature of impending pump cavitation and knows precisely which parameters can be adjusted without triggering cascade failures. This judgment comes from years of operating within the real-world limits of interconnected assets.

Why it matters for autonomy: Autonomous systems need human-defined guardrails to ensure optimization never compromises safety.

Without it: A system might squeeze out marginal efficiency gains while unknowingly accelerating equipment damage or triggering costly shutdowns.

3. Systems Integration Reality – Bridging AI Ambition with Industrial Constraints

Engineers know SCADA systems operate at 100 ms response times, PLCs run deterministic cycles, and industrial networks can’t handle probabilistic recommendations in mission-critical loops. They understand the difference between “technically possible” and “operationally feasible” under production constraints.

Why it matters for autonomy: This knowledge ensures AI integrates cleanly into the infrastructure that already runs the plant, without disrupting reliability or safety.

Without it: Autonomous projects stall or fail in production because the control layer can’t execute the AI’s recommendations in time or within spec.

4. Parametric Control Mastery – Adapting Autonomy Across the Asset Lifecycle

As a conveyor belt ages, optimal speed should drop by 2–3% per year due to bearing wear and belt stretch. An engineer instinctively adjusts control parameters based on asset condition, environmental factors, and production targets, a skill refined over decades.

Why it matters for autonomy: Engineers ensure AI systems don’t just work when new, but continue to operate optimally as assets age and conditions shift.

Without it: Over time, autonomous performance drifts, eroding ROI and creating hidden inefficiencies that compound across the operation.

Bottom line: These four skills are the bridge between AI-driven autonomy and industrial reality. Autonomous transformation succeeds only when the people who understand the physical, operational, and lifecycle constraints of the plant are the ones designing the systems that run it.

Enabling engineers to build autonomous operations: Decision Intelligence as a Layer

Here's what most AI vendors get wrong: They want to replace proven industrial control systems with probabilistic AI. Engineers know this is dangerous.

The Reality: DCS and SCADA systems represent billions in investment, decades of safety certification, and deterministic control logic that keeps people alive. These systems will, and must, remain the authoritative execution layer in industrial operations.

The Breakthrough: Autonomous intelligence operates as a decision layer above existing control infrastructure:

┌─────────────────────────────────────────────┐
│ DECISION INTELLIGENCE LAYER                 │
│ • Observe: Monitor real-time data           │
│ • Optimize: Simulate scenarios              │
│ • Orchestrate: Validate recommendations     │
└─────────────────────────────────────────────┘
                      ↓
┌─────────────────────────────────────────────┐
│ PROVEN CONTROL SYSTEMS (DCS/SCADA)          │
│ • Deterministic execution                   │
│ • Safety-certified operations               │
│ • Mission-critical reliability              │
└─────────────────────────────────────────────┘

Architectural Comparison:

Why This Architecture Wins: Engineers can deploy autonomous intelligence without gambling on mission-critical infrastructure. Start with decision support, progress toward automation... all within proven safety boundaries.
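One way to picture the layering is a small routing function that sits above the control system and never writes to it directly. The sketch below is an illustration under assumptions, not a description of any vendor's product: `Mode`, `Recommendation`, `SafeRange`, `route`, and the tag name in the usage note are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict


class Mode(Enum):
    DECISION_SUPPORT = "decision_support"  # an operator approves every change
    AUTOMATION = "automation"              # validated changes may be forwarded


@dataclass(frozen=True)
class Recommendation:
    tag: str      # hypothetical setpoint name
    value: float  # proposed setpoint value


@dataclass(frozen=True)
class SafeRange:
    low: float
    high: float


def route(rec: Recommendation, safe_ranges: Dict[str, SafeRange],
          mode: Mode, operator_approved: bool = False) -> str:
    """Decide what happens to a recommendation before it reaches DCS/SCADA."""
    limits = safe_ranges.get(rec.tag)
    # Unknown tags and out-of-range values never leave the decision layer.
    if limits is None or not (limits.low <= rec.value <= limits.high):
        return "rejected"
    # In decision-support mode a human stays in the loop.
    if mode is Mode.DECISION_SUPPORT and not operator_approved:
        return "awaiting_operator"
    # The control system remains the authoritative execution layer.
    return "forward_to_control_system"
```

For example, with `{"mill_feed_rate_tph": SafeRange(800.0, 1200.0)}` as the engineer-defined range, a proposed value of 1500.0 is rejected in either mode, while 1000.0 waits for an operator until the site moves from decision support to automation.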

Ensuring Engineers Are Building True Autonomy: Why Sequential Multi-Agent Systems Fail in Safety-Critical Environments

Decision Intelligence only delivers safe autonomy when the underlying architecture is built for the realities of industrial control, and that reality is best understood by engineers, not IT departments.

Here’s the uncomfortable truth: Most of today’s Agentic AI “multi-agent” systems are not true collaborative architectures. They are sequential task chains: agent A hands work to agent B, which passes it to agent C. This design collapses under the speed, precision, and safety constraints of industrial environments.

The Determinism Requirement: LLM-driven agents can produce different outputs for identical inputs. That’s acceptable for drafting emails; it’s unacceptable for controlling assets. If an engineer’s safety model says “increase pressure by 15 PSI” under certain conditions, the recommendation must be identical every time. Safety-critical control requires deterministic logic, something engineers already design for every day.
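A deterministic rule is simply a pure function: no randomness, no hidden state, same inputs in and same answer out. The sketch below illustrates the idea with the 15 PSI example. The temperature and flow thresholds, the `Rule` type, and `pressure_adjustment` are invented for illustration and are not taken from any real safety model.

```python
from typing import NamedTuple, Tuple


class Rule(NamedTuple):
    min_temp_c: float          # rule applies at or above this temperature
    max_flow_m3h: float        # ...and at or below this flow
    pressure_delta_psi: float  # adjustment to recommend when it applies


# Hypothetical rule table that an engineer would own and version-control.
RULES: Tuple[Rule, ...] = (
    Rule(min_temp_c=80.0, max_flow_m3h=120.0, pressure_delta_psi=15.0),
)


def pressure_adjustment(temp_c: float, flow_m3h: float) -> float:
    """Return the recommended pressure change in PSI.

    The result depends only on the arguments and the fixed rule table,
    so identical inputs always yield an identical recommendation.
    """
    for rule in RULES:
        if temp_c >= rule.min_temp_c and flow_m3h <= rule.max_flow_m3h:
            return rule.pressure_delta_psi
    return 0.0  # no rule matched: recommend no change
```

Calling `pressure_adjustment(85.0, 100.0)` a thousand times gives one distinct answer, which is the property a sampled LLM response cannot guarantee on its own.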

The Safety Logic Gap: Sequential AI lacks embedded industrial safety rules. It doesn’t inherently know that a 3% throughput gain might exceed metallurgical limits or breach environmental permits. Engineers carry this knowledge because they’ve spent years working inside those constraints, and they know how to encode them into control logic.
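Encoding those constraints can start very simply. The sketch below checks a proposed throughput gain against a list of hard caps. It assumes, as a deliberate simplification, that each constraint can be expressed as a maximum throughput in tonnes per hour. Real metallurgical and permit limits are usually more complex, and every name and number here is hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Constraint:
    name: str
    max_throughput_tph: float  # simplified: a cap on tonnes per hour


def violated_constraints(current_tph: float, gain_fraction: float,
                         constraints: Iterable[Constraint]) -> List[str]:
    """Return the names of constraints the proposed gain would breach."""
    proposed_tph = current_tph * (1.0 + gain_fraction)
    return [c.name for c in constraints
            if proposed_tph > c.max_throughput_tph]


if __name__ == "__main__":
    limits = [
        Constraint("metallurgical_limit", 1050.0),   # illustrative value
        Constraint("environmental_permit", 1020.0),  # illustrative value
    ]
    # A 3% gain on 1000 t/h proposes roughly 1030 t/h.
    print(violated_constraints(1000.0, 0.03, limits))
```

An empty list means the gain stays inside every encoded limit; anything else should block the recommendation before it reaches the control layer.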

Real-World Tests Across Industries

- Mining – If haul truck #47 develops a hydraulic fault, multiple agents must coordinate instantly: truck, crusher, maintenance, dispatch, weather, and fuel management agents must work in parallel to avoid downtime.

- Manufacturing – When welding robot #3 detects metal thickness variation, quality agents must coordinate with parts supply, line speed, and upstream stamping in real time to stop defects without halting production.

- Oil & Gas – When offshore platform sensors show pressure anomalies, safety agents must work with production, weather, and emergency response agents simultaneously to evaluate shutdown scenarios while sustaining safe operations.

Sequential Logic vs. Industrial Reality

- Sequential AI: Alert → Analyze → Reschedule → Adjust

- Industrial Operations: All relevant agents act in parallel, share validated data, and reach consensus inside proven safety boundaries.
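The contrast above can be sketched in a few lines. In this hypothetical version of the haul truck scenario, three agents evaluate the same shared event concurrently and the proposed action (pulling the truck for repair now) proceeds only if none objects. The agent functions, event fields, and thresholds are assumptions made for illustration; a production system would use a proper agent framework and validated data sources.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Sequence, Tuple

Event = Dict[str, float]
Vote = Tuple[str, bool]  # (agent name, approves the proposed action)


def maintenance_agent(event: Event) -> Vote:
    return ("maintenance", event["free_bays"] >= 1)


def dispatch_agent(event: Event) -> Vote:
    return ("dispatch", event["spare_trucks"] >= 1)


def crusher_agent(event: Event) -> Vote:
    return ("crusher", event["stockpile_hours"] >= 2.0)


def evaluate_in_parallel(
    event: Event, agents: Sequence[Callable[[Event], Vote]]
) -> Tuple[bool, List[str]]:
    """Run every agent on the same event at once and require consensus."""
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        # map() returns results in the order the agents were given.
        votes = list(pool.map(lambda agent: agent(event), agents))
    objectors = [name for name, approved in votes if not approved]
    return (not objectors, objectors)


if __name__ == "__main__":
    event = {"free_bays": 1, "spare_trucks": 0, "stockpile_hours": 3.0}
    agents = [maintenance_agent, dispatch_agent, crusher_agent]
    print(evaluate_in_parallel(event, agents))
```

Every agent sees the same data at the same time, and a single objection blocks the action. In a sequential chain, the dispatch objection would surface only after upstream agents had already acted.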

Why This Matters for Engineers: Engineers instinctively reject sequential AI because they understand cascade failure risk. IT teams may see “workflow automation,” but engineers see potential disasters. Building autonomy that works in the real world means embedding engineering judgment, safety logic, and deterministic control from day one, not bolting it on after a failure. That’s why over 60% of IT-led AI projects stall: architecture that ignores engineering realities will always break under operational stress.

IT as the Governance Backbone of Industrial Autonomy

The Decision Intelligence Layer can only deliver safe, reliable autonomy if it runs on an architecture that enforces trust, security, and compliance at scale. That’s where IT moves from an enabling function to the governance backbone of autonomous operations.

In safety-critical industries, the autonomy pipeline doesn’t end at an engineer’s decision model. Every action must pass through a framework that ensures:

- Security and Cyber Resilience – Autonomous systems are prime targets. IT hardens every layer against breaches, prevents rogue agent behavior, and ensures data sovereignty.

- Enterprise Integration – Operational decisions are only as good as the systems they connect to. IT ensures those integrations are robust, tested, and sustainable across ERP, MES, SCADA, and field systems.

- Regulatory Compliance – From environmental permits to export controls, IT keeps autonomous operations inside the law, continuously, not just at deployment.

- Architectural Stewardship – As autonomy evolves to Agentic and Multi-Agent Generative Systems, IT becomes the custodian of the architecture that determines whether those systems are scalable, interoperable, and safe.

In practice, this means engineers define what actions will achieve the operational intent, while IT governs how those actions are executed: securely, predictably, and within proven guardrails.

The partnership isn’t optional. Without IT governance, even the most advanced engineer-built autonomy will stall at scale, undermined by cyber risks, integration failures, or compliance gaps. With it, engineers can build true autonomy on a foundation that will stand up to the speed, complexity, and accountability demands of industrial environments.

The Choice: Lead or Lag Behind

The Competitive Reality Check:

While your organization debates AI governance frameworks, competitors are embedding operational advantages that compound relentlessly. Once a rival achieves a major throughput gain across multiple sites, that advantage multiplies quarter after quarter. You can't claw back millions in cumulative savings, thousands of hours of prevented downtime, or the operational agility that comes from autonomous systems learning and improving continuously.

Organizations Enabling Engineer-Led AI:

- Deploy solutions in 30-60 days versus 18-month IT projects

- Achieve measurable operational improvements that compound over time

- Build adaptive systems that get smarter with each operational cycle

- Convert retiring expertise into autonomous systems that work 24/7

The Timeline: Industrial transformation happens in quarters, not years. The organizations that enable engineer-led autonomy now will define the competitive landscape for the next decade.

In autonomous industry, code alone won't win. Your engineers will.

Sources:

- McKinsey Global Institute (2024): mckinsey.com/mgi/our-research/ai-industrial-applications

- XMPro Industrial AI Research (2024): xmpro.com/mags-the-killer-app-for-generative-ai-in-industrial-applications

- China Industrial AI Market (2025): finance.yahoo.com/news/china-emerges-global-leader-autonomous-133147144.html

- Amiko Consulting Manufacturing AI Report (2025): amiko.consulting/manufacturing-ai-revolution-next-generation-technologies

- U.S. Bureau of Labor Statistics (2024): bls.gov/emp/tables/employment-projections-program.htm

- XMPro Customer Success Data (2024-2025): xmpro.com/case-studies