The Ultimate Guide to Industrial AI: Doing It Right at Scale

A practical playbook for doing Industrial AI right at scale, built on XMPro's composable architecture and Multi-Agent Generative Systems.

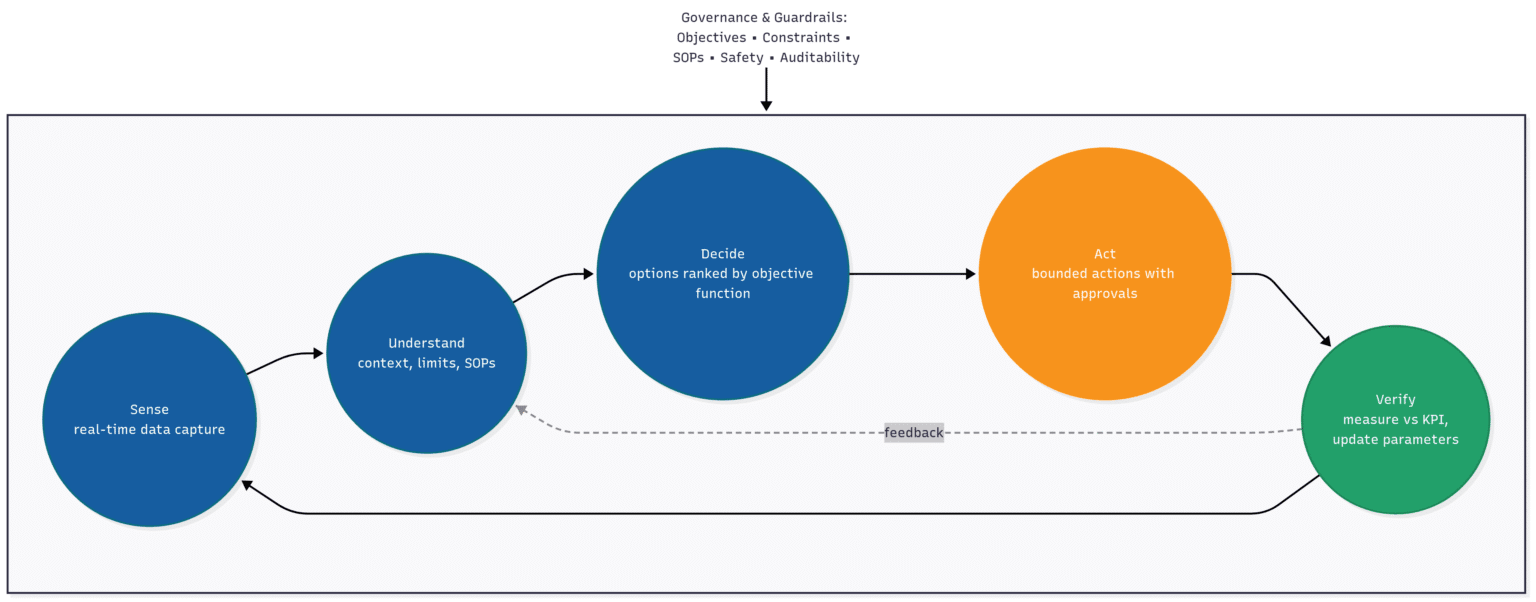

TL;DR: Industrial AI works when it closes the loop: sense, understand, decide, act, verify. Govern decisions with objective functions tied to finance-visible KPIs. Parameterize what changes by site so you deploy by configuration, not code. Progress from support to augmentation to bounded automation with clear guardrails.

Executive Summary

Industrial AI succeeds when it closes decision loops, not when it creates data lakes. This guide provides a field-tested operating model based on four layers: data contracts, context modeling, objective functions, and bounded actions. Companies using this approach report double-digit downtime reductions and seven-figure annualized value. Results come from governed, replicable systems that scale through parameterization.

The Industrial AI Reality Check

Despite heavy investment, many programs stall at pilot. The reason? They prioritize data and models over the decision loop. Industrial AI works when systems sense, understand, decide, act, and verify results against finance-visible KPIs.

Method note: This guide is based on analysis of 50+ industrial AI implementations across manufacturing, mining, and process industries from 2019 to 2024.

What Industrial AI Actually Means (And What It Isn't)

Industrial AI isn't a data lake filled with historical information or a collection of machine learning models. It's a governed decision system that:

- Observes process and asset state in real-time

- Maintains context through constraints, procedures, and operating limits

- Proposes options with quantified business impact

- Executes bounded actions with complete auditability

📊 Figure 1: The Decision Loop (Diagram showing: Sense → Understand → Decide → Act → Verify, with guardrails at each stage)

This approach transforms how industrial operations handle predictive maintenance, energy management, quality control, and production scheduling.

The Four-Layer Decision-Centric Operating Model

Layer 1: Data Contracts & Event Streaming

Create contracts at the OT/IT boundary (units, sampling, tolerances, quality flags). Normalize and validate streams in Data Stream Designer. Preserve lineage so you can always explain an outcome.

📋 Data Contract Example

Signal: CRUSHER_1_VIB_RMS | Unit: mm/s | Sample: 1s | Tolerance: ±0.1 | Missing: ≤5s → hold_last | Quality: QF=bad → suppress_action

Implemented in Data Stream Designer with complete lineage and automated tests.
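A minimal Python sketch of how the contract above might be enforced on a stream. The `DataContract` and `Reading` classes and the `apply_contract` function are hypothetical names for illustration, not Data Stream Designer APIs. The sketch assumes that held values stay actionable and that gaps longer than the limit suppress action; the contract line itself does not say.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DataContract:
    signal: str
    unit: str
    sample_period_s: float  # recorded for documentation; not enforced in this sketch
    tolerance: float        # recorded for documentation; not enforced in this sketch
    max_gap_s: float        # missing samples up to this age are bridged with hold_last


@dataclass
class Reading:
    t: float                # timestamp in seconds
    value: Optional[float]  # None means the sample is missing
    quality: str = "good"   # "good" or "bad"


def apply_contract(contract, readings):
    """Return (t, value, actionable) tuples for time-ordered readings."""
    out = []
    last_value, last_good_t = None, None
    for r in readings:
        if r.quality == "bad":
            # QF=bad -> suppress_action: keep the sample visible, never act on it
            out.append((r.t, r.value, False))
        elif r.value is None:
            recent = last_good_t is not None and r.t - last_good_t <= contract.max_gap_s
            # hold_last inside the allowed gap; otherwise report the gap (assumption)
            out.append((r.t, last_value, True) if recent else (r.t, None, False))
        else:
            last_value, last_good_t = r.value, r.t
            out.append((r.t, r.value, True))
    return out


contract = DataContract("CRUSHER_1_VIB_RMS", "mm/s", 1.0, 0.1, 5.0)
stream = [Reading(0, 2.0), Reading(1, None), Reading(2, 2.4, "bad"), Reading(8, None)]
for row in apply_contract(contract, stream):
    print(row)
```

Returning an explicit `actionable` flag with every sample keeps the suppression decision next to the data it applies to, which makes the lineage easier to explain later.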

Why this matters: When your AI system recommends an action, you can trace back through every data point and transformation that led to that decision. We monitor signal and model drift; if drift exceeds thresholds, actions pause and the system reverts to assist mode while alerting operations. This transparency is crucial for both troubleshooting and regulatory compliance.
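The pause-and-revert behavior can be sketched as a small gate. This example assumes drift is measured as the shift of a recent window's mean from a baseline, in baseline standard deviations. The text does not name a drift metric, so `drift_score`, `gate_mode`, and the threshold of 3.0 are illustrative choices only.

```python
from statistics import mean, pstdev


def drift_score(baseline, recent):
    """Shift of the recent mean from the baseline mean, in baseline standard deviations."""
    sigma = pstdev(baseline)
    shift = abs(mean(recent) - mean(baseline))
    if sigma == 0:
        return 0.0 if shift == 0 else float("inf")
    return shift / sigma


def gate_mode(mode, baseline, recent, threshold=3.0):
    """Return (mode, alert). Drift above the threshold forces assist mode."""
    score = drift_score(baseline, recent)
    if score > threshold:
        return "assist", f"drift of {score:.1f} sigma exceeds {threshold}: actions paused"
    return mode, None


baseline = [1.0, 2.0, 3.0]
print(gate_mode("auto", baseline, [2.0, 2.0]))    # no drift: mode is unchanged
print(gate_mode("auto", baseline, [10.0, 10.0]))  # large shift: falls back to assist
```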

Layer 2: Context & Constraints

Raw data becomes actionable intelligence when you add context. This layer models:

- Operating envelopes and safety interlocks

- Standard operating procedures

- Asset hierarchies and relationships

- Production schedules and maintenance windows

The key insight: Context turns predictions into decisions. A vibration spike might indicate normal startup behavior or impending failure—context determines which interpretation drives action.
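To make the vibration example concrete, here is a hedged sketch in which the same reading is interpreted differently depending on asset state. The `AssetContext` fields, the grace period, and the limit values are assumptions for illustration, not values from any real operating envelope.

```python
from dataclasses import dataclass


@dataclass
class AssetContext:
    state: str                 # "startup", "running", or "stopped"
    seconds_in_state: float
    vib_limit_running: float   # mm/s, hypothetical limit for steady running
    startup_grace_s: float     # hypothetical window in which spikes are expected


def interpret_vibration(vib_rms, ctx):
    """Map one vibration reading to an interpretation using operating context."""
    if ctx.state == "stopped":
        return "ignore"
    if ctx.state == "startup" and ctx.seconds_in_state <= ctx.startup_grace_s:
        return "expected_transient"
    if vib_rms > ctx.vib_limit_running:
        return "investigate"
    return "normal"


starting = AssetContext("startup", 20.0, vib_limit_running=4.5, startup_grace_s=60.0)
running = AssetContext("running", 3600.0, vib_limit_running=4.5, startup_grace_s=60.0)
print(interpret_vibration(6.0, starting))  # same reading, startup context
print(interpret_vibration(6.0, running))   # same reading, steady-state context
```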

Layer 3: Decisions & Objective Functions

This layer defines what "good" looks like for your operation through measurable objective functions:

- Throughput optimization (OEE, production rate)

- Energy efficiency (MWh/ton, cost per unit)

- Asset reliability (MTBF, maintenance costs)

- Quality conformance (specification limits, yield)

- Risk management (safety incidents, environmental impact)

🎯 Objective Function Example

Maximize: Throughput - 0.6·EnergyCost - 0.4·QualityPenalty

Subject to: SafetyMargin ≥ 15% and SpecYield ≥ 98%

This makes trade-offs explicit: with weights of 0.6 and 0.4, one unit of energy cost counts 1.5 times as much as one unit of quality penalty in optimization decisions.

Objective functions enable AI to weigh trade-offs between competing priorities and make these trade-offs visible to operators.
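The example objective function can be evaluated directly. The sketch below scores candidate options with the weights from the example and filters out any that break the two constraints. It assumes all three terms are already normalized to comparable units; the `Option` class and the sample numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    throughput: float
    energy_cost: float
    quality_penalty: float
    safety_margin: float  # fraction, e.g. 0.15 for 15%
    spec_yield: float     # fraction, e.g. 0.98 for 98%


def score(o):
    """Throughput - 0.6*EnergyCost - 0.4*QualityPenalty, as in the example."""
    return o.throughput - 0.6 * o.energy_cost - 0.4 * o.quality_penalty


def feasible(o):
    """Constraints from the example: SafetyMargin >= 15% and SpecYield >= 98%."""
    return o.safety_margin >= 0.15 and o.spec_yield >= 0.98


def best_option(options):
    """Return the highest-scoring feasible option, or None if none is feasible."""
    candidates = [o for o in options if feasible(o)]
    return max(candidates, key=score) if candidates else None


options = [
    Option("A", 100.0, 50.0, 10.0, 0.20, 0.99),
    Option("B", 120.0, 40.0, 5.0, 0.10, 0.99),  # best raw score, but breaks safety margin
    Option("C", 105.0, 60.0, 0.0, 0.18, 0.985),
]
print(best_option(options).name)
```

Keeping the constraints as a hard filter rather than folding them into the score means a high-throughput option can never buy its way past a safety limit.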

Layer 4: Actions & Guardrails

The final layer executes decisions through bounded autonomy. Actions run with rate limits, approvals, and automatic rollback. Every change is logged with inputs, rationale, and expected impact.

Runbook Note: Every action has a documented rollback. The log of decisions and actions is exportable as CSV/syslog for enterprise audit. If conditions drift, the system reverts and alerts operations.

This ensures AI systems can act inside clear boundaries. Humans stay in control of critical decisions.
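A rough sketch of what a bounded action could look like in code: a setpoint change that is checked against operating limits, a per-action rate limit, and an approval threshold, with every attempt logged and a one-step rollback. The class names, fields, and status strings are hypothetical and do not represent XMPro's implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrail:
    low: float
    high: float
    max_step: float        # largest change allowed in one action (rate limit)
    approval_above: float  # changes larger than this need human approval


@dataclass
class BoundedSetpoint:
    value: float
    guard: Guardrail
    audit: list = field(default_factory=list)
    history: list = field(default_factory=list)

    def apply(self, target, rationale, expected_impact, approved=False):
        step = abs(target - self.value)
        if not (self.guard.low <= target <= self.guard.high):
            status = "rejected: outside operating limits"
        elif step > self.guard.max_step:
            status = "rejected: exceeds rate limit"
        elif step > self.guard.approval_above and not approved:
            status = "pending: approval required"
        else:
            self.history.append(self.value)  # remember the prior value for rollback
            self.value = target
            status = "applied"
        # Log every attempt, including rejected ones, with inputs and rationale
        self.audit.append({"target": target, "rationale": rationale,
                           "expected_impact": expected_impact, "status": status})
        return status

    def rollback(self):
        """Restore the most recent prior value, if there is one."""
        if self.history:
            self.value = self.history.pop()
            self.audit.append({"target": self.value, "rationale": "rollback",
                               "expected_impact": None, "status": "rolled back"})
        return self.value


sp = BoundedSetpoint(50.0, Guardrail(low=40.0, high=60.0, max_step=5.0, approval_above=2.0))
print(sp.apply(51.0, "reduce energy use", "-1% MWh/ton"))
print(sp.apply(54.0, "reduce energy use", "-3% MWh/ton"))
print(sp.rollback())
```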

Multi-Agent Generative Systems (MAGS): Agentic AI for Industry

XMPro's Multi-Agent Generative Systems (MAGS) coordinate specialist agents under APEX AI, XMPro's orchestration layer. APEX AI handles planning, memory, permissions, and guardrails so that agents collaborate safely and explainably. Rather than building one monolithic system, this approach creates focused agents with specific roles:

- Reliability Agent: Detects failure modes, ranks risk, proposes work windows; never alters controls directly

- Energy Management Agent: Optimizes power consumption within safety constraints; cannot override production targets

- Quality Control Agent: Monitors spec conformance, recommends adjustments; requires approval for recipe changes

- Scheduling Agent: Balances production demands with maintenance windows; coordinates with other agents

- Knowledge Synthesis Agent: Captures operational expertise, provides context; advisory role only

How MAGS Agents Collaborate Safely

Each agent operates with:

- Clear role definition and specific objective functions

- Bounded memory and planning scoped to its expertise

- Defined permissions and operational rate limits

- Transparent reasoning that explains recommendations

Agents collaborate through structured protocols while maintaining separation between control logic and execution—the key to safe industrial automation.
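One way to picture per-agent permissions is a deny-by-default policy table. The sketch below is illustrative only: the action names and the `authorize` function are invented for this example and are not the APEX AI permission model. The role boundaries follow the agent descriptions above.

```python
# Hypothetical policy table: what each agent may do freely, and what needs approval.
POLICY = {
    "reliability": {"allow": {"propose_work_window"}, "needs_approval": set()},
    "energy": {"allow": {"adjust_power_setpoint"}, "needs_approval": set()},
    "quality": {"allow": {"recommend_adjustment"}, "needs_approval": {"change_recipe"}},
    "scheduling": {"allow": {"propose_schedule"}, "needs_approval": set()},
    "knowledge": {"allow": {"provide_context"}, "needs_approval": set()},
}


def authorize(agent, action, approved=False):
    """Deny by default; allow listed actions; gate approval-only actions."""
    policy = POLICY.get(agent)
    if policy is None:
        return False
    if action in policy["allow"]:
        return True
    if action in policy["needs_approval"]:
        return approved
    return False


print(authorize("quality", "change_recipe"))                 # blocked until approved
print(authorize("quality", "change_recipe", approved=True))  # allowed with approval
print(authorize("reliability", "alter_control"))             # never permitted
```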

Agent Collaboration in Action: The Reliability Agent flags a bearing risk on Pump 7. The Scheduling Agent finds a 4-hour window during tomorrow's shift change. The Energy Management Agent verifies the backup pump won't breach tariff caps. APEX AI routes approvals through the shift supervisor, executes the maintenance schedule, and logs everything with rollback procedures ready.

User Experience Patterns That Actually Work

Even sophisticated AI fails with poor user interfaces. Industrial operators need interfaces that minimize cognitive load and support rapid decision-making:

At-a-Glance Status Displays

- Current operating mode and key performance indicators

- Active constraints and any limit violations

- Deviation alerts with clear severity levels

Recommendations With Reasoning

- Predicted impact of proposed actions

- Constraints or limits that may be affected

- Confidence levels and uncertainty ranges

- Historical context for similar situations

Continuity of Control

- No modal dialogs that trap operators

- Reversible actions with clear undo paths

- Seamless transitions between manual and automatic modes

Context On Demand

- Drill-down capabilities without leaving main screens

- Signal trend analysis and historical comparisons

- Complete audit trails for all decisions and actions

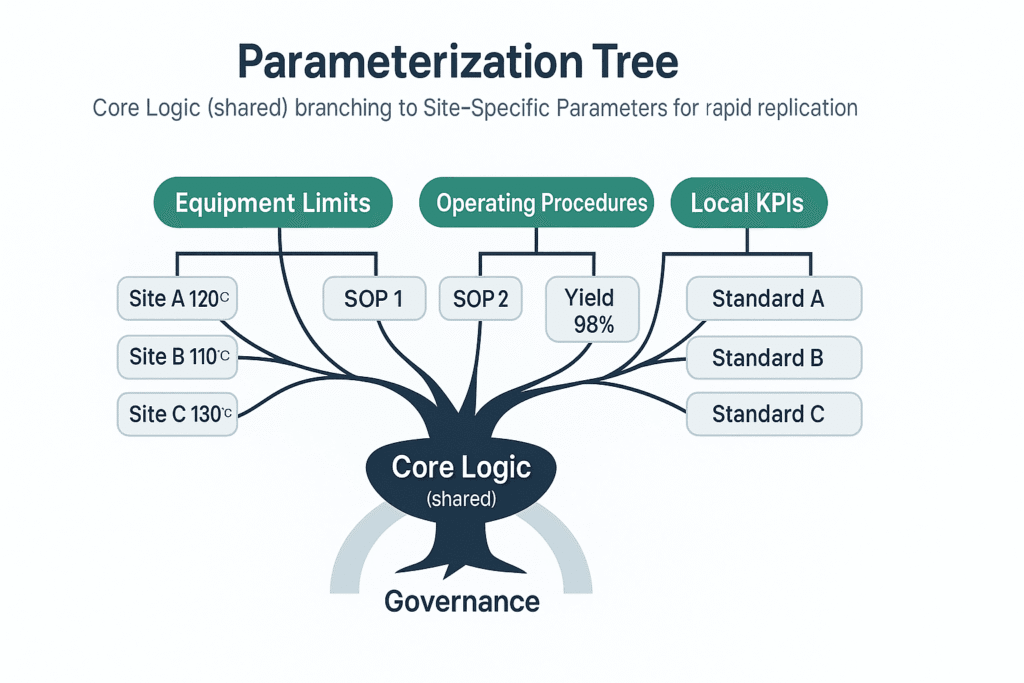

Scaling Through Parameterization, Not Re-Engineering

Package logic once. Vary only site parameters: tag maps, units, limits, calendars, policies, and integrations. Deploy by configuration, not code.

What Varies by Site:

- Tag maps and units (sensor IDs, engineering units, scaling factors)

- Operating limits and safety policies (thresholds, interlocks, alarm setpoints)

- Shift calendars and production windows (schedules, maintenance slots, downtime rules)

- Local approvals and roles (who approves what, escalation paths, permissions)

- System integrations per site (SCADA endpoints, historian connections, CMMS APIs)

🌳 Figure 2: Parameterization Tree

This transforms site replication from a months-long project into a configuration exercise, dramatically accelerating deployment across industrial networks.
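As a sketch of "package logic once, vary only site parameters", the function below contains no site-specific values. Everything that differs between sites lives in a configuration dictionary. The tag names for the second site, the limits, and the roles are invented for illustration.

```python
# Site parameters: the only thing that changes between deployments (hypothetical values).
SITE_A = {
    "tag_map": {"crusher_vibration": "CRUSHER_1_VIB_RMS"},
    "units": {"crusher_vibration": "mm/s"},
    "limits": {"crusher_vibration": 7.0},  # limits are stored in mm/s
    "approver_role": "shift_supervisor",
}
SITE_B = {
    "tag_map": {"crusher_vibration": "CR2.VIB"},
    "units": {"crusher_vibration": "in/s"},
    "limits": {"crusher_vibration": 6.0},
    "approver_role": "area_manager",
}

TO_MM_S = {"mm/s": 1.0, "in/s": 25.4}  # unit conversion to the common internal unit


def check_vibration(site, raw_values):
    """Shared logic: resolve the tag, normalize units, compare to the site limit."""
    tag = site["tag_map"]["crusher_vibration"]
    value = raw_values[tag] * TO_MM_S[site["units"]["crusher_vibration"]]
    return {
        "tag": tag,
        "value_mm_s": value,
        "over_limit": value > site["limits"]["crusher_vibration"],
        "approver": site["approver_role"],
    }


print(check_vibration(SITE_A, {"CRUSHER_1_VIB_RMS": 8.0}))
print(check_vibration(SITE_B, {"CR2.VIB": 0.2}))
```

Adding a third site in this sketch means adding a third dictionary, not editing `check_vibration`.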

The Decision Intelligence Continuum: A Proven Path to Automation

Rather than jumping directly to full automation, successful deployments follow a progressive path:

Decision Support (Weeks 1-4)

- Establish data streams with quality contracts

- Build operator dashboards with clear thresholds

- Define objective functions and baseline KPIs

- Create alerting systems with actionable recommendations

Decision Augmentation (Weeks 5-8)

- Introduce AI agents in advisory mode

- Run shadow recommendations alongside human decisions

- Track impact and refine explanations with subject matter experts

- Build confidence in AI reasoning and reliability

Decision Automation (Weeks 9-12)

- Enable bounded autonomous actions with appropriate guardrails

- Implement approval workflows for higher-risk decisions

- Create audit trails linking actions to business outcomes

- Parameterize successful patterns for replication
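The three stages differ mainly in what happens to a recommendation once it is produced. A simplified sketch, with hypothetical stage and outcome names, assuming a two-level risk rating:

```python
def route_recommendation(stage, risk):
    """Return what happens to one recommendation at a given rollout stage."""
    if stage == "support":
        return "display_only"        # operators see it on the dashboard
    if stage == "augmentation":
        return "shadow_log"          # recorded alongside the human decision
    if stage == "automation":
        # bounded autonomy for low-risk actions, approval workflow otherwise
        return "execute_bounded" if risk == "low" else "request_approval"
    raise ValueError(f"unknown stage: {stage}")


for stage in ("support", "augmentation", "automation"):
    print(stage, route_recommendation(stage, "high"))
```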

Reference Implementation Patterns

Predictive Maintenance → Maintenance Coordination

- Detect failure modes through sensor analysis and historical patterns

- Prioritize maintenance actions by risk level and production impact

- Coordinate with the CMMS to auto-stage work orders

- Verify completion and update asset reliability models
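The prioritization step in this pattern can be illustrated with a simple expected-loss ranking. This is one possible scoring rule, assumed here for illustration; the `Finding` fields and the sample figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    asset: str
    failure_probability: float  # 0..1 over the planning horizon
    downtime_hours: float       # expected outage if the failure occurs
    cost_per_hour: float        # production value lost per hour of outage


def expected_loss(f):
    """Risk level times production impact, expressed as expected cost."""
    return f.failure_probability * f.downtime_hours * f.cost_per_hour


def prioritize(findings):
    """Highest expected loss first."""
    return sorted(findings, key=expected_loss, reverse=True)


findings = [
    Finding("Pump 7", 0.3, 8.0, 10_000.0),
    Finding("Conveyor 2", 0.6, 2.0, 5_000.0),
    Finding("Crusher 1", 0.1, 24.0, 20_000.0),
]
for f in prioritize(findings):
    print(f.asset, round(expected_loss(f)))
```

Note that the asset most likely to fail (Conveyor 2) ranks last here, because its production impact is small.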

Energy Management → Cost Optimization

- Forecast energy demand and price fluctuations

- Propose optimal setpoint adjustments within operating windows

- Verify quality and safety constraints remain satisfied

- Schedule changes to minimize cost impact

Quality Control → Process Optimization

- Detect process drift through statistical analysis

- Isolate root causes across multiple process variables

- Advise containment actions and adjusted sampling rates

- Monitor effectiveness of corrective actions
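For the drift-detection step, one common statistical technique is an EWMA control chart. The text does not say which method is used, so this is an assumed choice. The target, sigma, smoothing factor `lam`, and limit width `L` are illustrative parameters.

```python
import math


def ewma_alarms(values, target, sigma, lam=0.2, L=3.0):
    """Flag each sample whose EWMA falls outside time-varying control limits."""
    z = target
    alarms = []
    for i, x in enumerate(values, start=1):
        z = lam * x + (1 - lam) * z
        # Standard EWMA limit: L * sigma * sqrt(lam/(2-lam) * (1 - (1-lam)^(2i)))
        width = L * sigma * math.sqrt(lam / (2 - lam) * (1 - (1 - lam) ** (2 * i)))
        alarms.append(abs(z - target) > width)
    return alarms


# A process that sits on target and then shifts up by three sigma
samples = [10.0, 10.0, 13.0, 13.0, 13.0, 13.0]
print(ewma_alarms(samples, target=10.0, sigma=1.0))
```

Because the EWMA smooths over recent samples, the alarm fires one sample after the shift begins rather than on the first shifted reading, which trades a short delay for fewer false alarms on isolated spikes.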

Production Scheduling → Throughput Optimization

- Simulate alternative production scenarios

- Recommend optimal plans considering maintenance windows

- Execute schedule changes with continuous constraint checking

- Adapt to real-time disruptions and demand changes

Pre-Automation Readiness Checklist

Before implementing any autonomous actions, ensure:

- Objective function defined and linked to financial KPIs

- Data contracts established with quality gates passing

- Operating envelopes and procedures formally modeled

- Subject matter expert knowledge captured in rules and playbooks

- Simulation testing completed for normal and edge cases

- Rollback procedures verified and documented

- Approval workflows designed and tested

- Audit trail system operational
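The checklist above lends itself to a simple automated gate. In this sketch the item keys are shorthand invented for the eight checklist entries, and any item not explicitly marked complete is treated as missing.

```python
CHECKLIST = [
    "objective_function_linked_to_kpis",
    "data_contracts_quality_gates_passing",
    "operating_envelopes_modeled",
    "sme_knowledge_captured",
    "simulation_testing_completed",
    "rollback_verified",
    "approval_workflows_tested",
    "audit_trail_operational",
]


def ready_for_automation(status):
    """Return (ready, missing_items). Unknown or False items count as missing."""
    missing = [item for item in CHECKLIST if not status.get(item, False)]
    return (not missing, missing)


status = {item: True for item in CHECKLIST}
status["rollback_verified"] = False
ready, missing = ready_for_automation(status)
print(ready, missing)
```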

Build, Buy, or Compose: Making the Right Choice

- Buy pre-built solutions when addressing commodity problems with standard requirements

- Build custom systems when you need proprietary competitive advantages

- Compose with XMPro when you have mixed legacy systems, real operational constraints, and need to maintain flexibility while delivering immediate value

The composable approach allows you to integrate existing investments while building new capabilities incrementally.

Getting Started: From Concept to Results in 90 Days

Success starts with selecting one decision that impacts this quarter's results. Follow this proven approach:

- Define the objective function and key constraints

- Establish data contracts and quality validation

- Build operator interfaces with clear reasoning displays

- Deploy AI agents in advisory mode first

- Verify explanations and impact with domain experts

- Automate the smallest safe subset of actions

- Parameterize successful patterns for replication

The Future of Industrial Operations

Leading industrial companies are moving beyond traditional automation toward adaptive autonomous operations. This evolution requires AI systems that can:

- Learn from changing conditions and operator feedback

- Explain their reasoning in terms operators understand

- Adapt to new constraints and objectives without reprogramming

- Collaborate safely with human experts and other AI systems

The companies that master this transition will have significant competitive advantages in efficiency, quality, and responsiveness.

Real-World Impact: Proven Results Across Industries

Impact Methodology: Impact is measured against pre-deployment baseline and finance-visible KPIs (downtime avoided, yield, energy). We attribute conservatively and publish assumptions. Results verified by customer finance teams over 6-12 month measurement windows.

Companies using this decision-centric approach have achieved:

- Double-digit downtime reductions through predictive maintenance (verified: 15-35% range)

- Seven-figure annualized value within deployment year (finance-verified at site level)

- 80% fewer equipment failures for targeted failure modes (anonymized customer: global mining company)

- 95% reduction in alarm noise while improving response times

- Finance-verified impact measured against conservative baselines

These results come from treating AI as a decision-making partner rather than just an analytical tool.

Frequently Asked Questions

What is an objective function in Industrial AI? An objective function defines what "good" looks like mathematically, balancing competing priorities like throughput, energy costs, and quality. Example: Maximize Throughput - 0.6·EnergyCost - 0.4·QualityPenalty.

How do data contracts reduce deployment risk? Data contracts establish agreements about units, sampling, tolerances, and quality flags at the OT/IT boundary. This prevents "garbage in, garbage out" scenarios and ensures AI recommendations are based on validated data.

What is bounded autonomy? Bounded autonomy lets AI systems act within defined limits while humans retain control over critical decisions. Actions are gated by policies, approvals, and automatic rollback capabilities.

How do you replicate a use case to a second site? Through parameterization: package logic once, then vary only site-specific parameters like tag maps, limits, and policies. This turns replication into a configuration task rather than a development project.

Does this work with air-gapped/on-premises deployments? Yes. XMPro iBOS supports edge-native deployment with local AI models, ensuring data never leaves your network while maintaining full functionality and security compliance.

Ready to Transform Your Industrial Operations?

The shift from reactive to predictive to autonomous operations isn't just a technology upgrade. It's a competitive necessity. Companies that successfully implement decision-centric AI will outperform those stuck in manual processes or failed pilot projects.

Take the first step: Identify one critical decision in your operation that impacts safety, quality, throughput, or costs. Define its objective function, establish data quality, and let AI assist with recommendations before automating actions.

Primary Action: Schedule a 30-minute consultation to see how decision-centric AI works in your specific environment.

In 30 minutes, you'll get: (1) an objective-function draft for your highest-value decision, (2) a site-parameterization checklist, (3) a timeline to first assist-mode action.

Secondary Action: Download the Site Replication Checklist - A practical CSV template for parameterizing and scaling your first successful use case.

Want to see how this approach works with XMPro's Agent Library and Multi-Agent Generative Systems? Our team can walk you through value-generating decision loops that pay for themselves.

XMPro's Intelligent Business Operations Suite (iBOS) enables rapid deployment of decision-centric AI across manufacturing, mining, oil & gas, utilities, and process industries. Our Multi-Agent Generative Systems (MAGS) and APEX AI platform have helped Fortune 500 companies achieve measurable ROI through safer, smarter industrial automation.